Both AI and cognitive science can gain by studying the human solutions to difficult computational problems [1]. My talk will focus on two problems: concept learning and question asking. Compared to the best algorithms, people can learn new concepts from fewer examples, and then use their concepts in richer ways -- for imagination, extrapolation, and explanation, not just classification. Moreover, learning is often an active process; people can ask rich and probing questions in order to reduce uncertainty, while standard active learning algorithms ask simple and stereotyped queries. I will discuss my work on program induction as a cognitive model and potential solution for extracting richer concepts from less data, with applications to learning handwritten characters [2] and learning recursive visual concepts from examples. I will also discuss program synthesis as a model of question asking in simple games [3].

[1] Lake, B. M., Ullman, T. D., Tenenbaum, J. B., and Gershman, S. J. (2016). Building machines that learn and think like people. Preprint available on arXiv:1604.00289.

[2] Lake, B. M., Salakhutdinov, R., and Tenenbaum, J. B. (2015). Human-level concept learning through probabilistic program induction. Science, 350(6266), 1332-1338.

[3] Rothe, A., Lake, B. M., and Gureckis, T. M. (2016). Asking and evaluating natural language questions. In Proceedings of the 38th Annual Conference of the Cognitive Science Society.

Overview

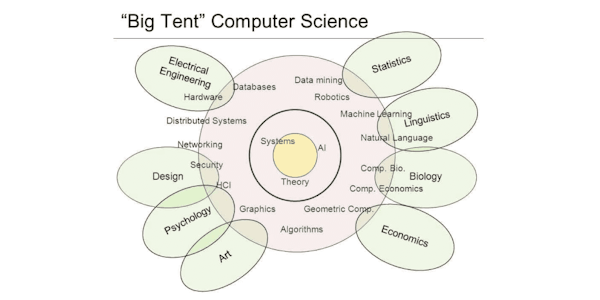

Syllabus

Introduction.

How do people learn such rich concepts from very little data?

Learning to recognize objects in computer vision: Deep neural networks and big data.

Active learning for people and machines.

Outline: Concept learning.

Concepts and questions as programs.

Standard machine learning.

Human-level concept learning.

Compositionality: Representations are constructed through a combination of parts (primitives).

Causality: The generative model captures, at an abstract level, aspects of the real.

Bayesian Program Learning (BPL).

Generate a new example.

Visual Turing Test - Generating new examples.

One-shot classification performance: After all models are pre-trained on 30 alphabets of characters.

If the mind can write programs to represent concepts, what are the limits?

Causality influences perception.

Pre-trained AlexNet (deep convolutional network).

Results - Experiment 1 - Classification.

Why is the model better than people? A failure of search (mixing)?

Experiment 2 - Generation.

13 different fractal concepts.

Human-level active learning.

Experiment 1: Free-form question asking.

Results: generated questions.

Experiment 2: Evaluating questions for quality.

A 'Yardstick' for question quality.

People are good at evaluating questions.

Can we build machines that ask richer, more human-like questions during learning?

Compositionality in question structure.

A grammar that produces questions.
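The syllabus items on questions as programs and on a yardstick for question quality can be illustrated with a small sketch. It assumes that a question is a function from a hidden state to an answer, and that quality is scored by expected information gain (the expected reduction in entropy over hypotheses). That is a common choice in active learning, but this listing does not say which measure the talk uses. The toy domain, the primitives `color`, `size`, `equals`, and `both`, and the question wordings are all hypothetical and are not taken from the talk.

```python
import math
from collections import defaultdict

# Hypothetical toy domain: the hidden state is one of four equally likely
# (color, size) pairs. These values are illustrative assumptions.
HYPOTHESES = [("red", 1), ("red", 3), ("blue", 2), ("blue", 3)]
PRIOR = {h: 1.0 / len(HYPOTHESES) for h in HYPOTHESES}

# Primitive parts from which question programs are composed (assumed names).
def color(h):
    return h[0]

def size(h):
    return h[1]

def equals(feature, value):
    """Build a yes/no question: does this feature take this value?"""
    return lambda h: feature(h) == value

def both(q1, q2):
    """Combine two yes/no questions into their conjunction."""
    return lambda h: q1(h) and q2(h)

def entropy(dist):
    """Shannon entropy in bits of a {hypothesis: probability} mapping."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)

def expected_information_gain(question, prior):
    """Prior entropy minus the expected posterior entropy after the answer."""
    # Group hypotheses by the answer the question program returns for them.
    by_answer = defaultdict(dict)
    for h, p in prior.items():
        by_answer[question(h)][h] = p
    expected_posterior_entropy = 0.0
    for group in by_answer.values():
        p_answer = sum(group.values())
        posterior = {h: p / p_answer for h, p in group.items()}
        expected_posterior_entropy += p_answer * entropy(posterior)
    return entropy(prior) - expected_posterior_entropy

# Each question is a small program; the wording is only a label.
QUESTIONS = {
    "Is it red?": equals(color, "red"),
    "What is the size?": size,
    "Is it blue and of size 3?": both(equals(color, "blue"), equals(size, 3)),
}

SCORES = {text: expected_information_gain(q, PRIOR)
          for text, q in QUESTIONS.items()}
BEST = max(SCORES, key=SCORES.get)

if __name__ == "__main__":
    for text, score in sorted(SCORES.items(), key=lambda kv: -kv[1]):
        print(f"{score:.3f} bits  {text}")
```

In this toy setting the open-ended size question scores 1.5 bits, against 1.0 bit for the simple color query and about 0.81 bits for the conjunction, which shows one way a richer question can reduce more uncertainty than a stereotyped yes/no query. A fuller model would enumerate or sample question programs from a grammar over such primitives, which this sketch does not attempt.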

Taught by

Stanford Online