A Look at Coursera’s In-Course Data-Driven Features

A look at the in-course features that Coursera has developed using data collected from their learners.

In the early days, when MOOCs started getting popular, there was a lot of talk about how data collected from millions of learners would help improve online learning and, potentially, learning in general.

In August 2012, Coursera co-founder Daphne Koller gave a TED Talk titled “What we’re learning from online education.” Here’s part of the talk’s description:

“With Coursera, each keystroke, quiz, peer-to-peer discussion and self-graded assignment builds an unprecedented pool of data on how knowledge is processed.”

She speculated that this data could potentially solve Bloom’s 2 sigma problem: offering one-on-one support to learners at scale to help them truly master the learning content.

Almost eight years later, we have 1,000 universities offering 15,000 online courses. The MOOC formula has been polished, but the product remains essentially the same.

However, platforms like Coursera have built features powered by data collected from their learners. Generally, these are geared toward two objectives:

- Guiding learners to the right program — for instance, tailoring the offerings shown to each learner according to their interests.

- Leveraging data to support the learning experience — often through just-in-time “interventions” designed to encourage course progress.

In this article, I’ll focus on the second category. Specifically, I’ll focus on features that are visible to learners. I’ll do my best to introduce them in the simplest way possible, minimizing marketing speak and providing numbers when available.

To this end, I went through various Coursera blog posts and video presentations, and I tried to experience the features myself on Coursera’s platform. Personally, as a Coursera learner since 2012, I haven’t seen much benefit from these features.

In-Course Help System

Coursera announced this feature two years ago and called it automated coaching.

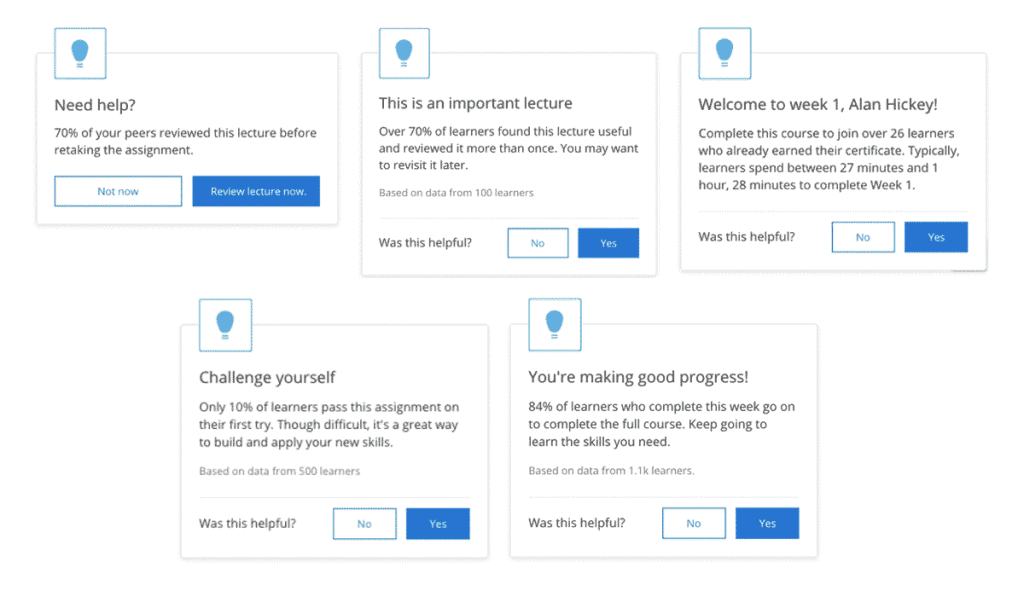

As learners progress through a course, they receive notifications in the form of pop-up messages or emails. Coursera calls these “interventions.” They come in different forms: some are there to encourage learners; others, to emphasize important lessons.

For this, the company is leveraging its large dataset to build machine learning models that help deliver these messages at the appropriate time.

According to Coursera, automated coaching leads to a 12% increase in item completions.

Smart Reviews

When learners make a mistake in a quiz, they’re pointed to relevant learning material. Some instructors were already doing this manually: adding links to appropriate course content in their feedback to quiz questions.

Coursera has now automated this process. It matches quiz questions with specific course content by identifying what content helped learners pass quizzes they’d previously failed, and by collecting learners’ feedback on the relevance of the suggested material.
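To make the matching idea concrete, here is a minimal sketch of how such a heuristic could work. It is not Coursera’s actual implementation: the event format, the function name `rank_review_material`, and the sample data are all assumptions made for illustration, and the learner-feedback signal is left out entirely.

```python
from collections import Counter, defaultdict


def rank_review_material(events):
    """Rank content per quiz question by how often it was viewed
    between a failed attempt and a later passing attempt.

    `events` is a chronologically ordered list of hypothetical tuples:
    (learner, kind, item, passed), where kind is "quiz" or "view".
    """
    # pending[learner][question] = content viewed since that learner failed it
    pending = defaultdict(dict)
    # helped[question][content] = number of fail-then-pass recoveries
    helped = defaultdict(Counter)

    for learner, kind, item, passed in events:
        if kind == "view":
            # Credit this content to every question the learner is stuck on.
            for viewed in pending[learner].values():
                viewed.add(item)
        elif kind == "quiz":
            if not passed:
                pending[learner].setdefault(item, set())
            elif item in pending[learner]:
                for content in pending[learner].pop(item):
                    helped[item][content] += 1

    return {q: [c for c, _ in counts.most_common()] for q, counts in helped.items()}


# Made-up sample data: two learners recover on q1, one passes first time.
events = [
    ("ana", "quiz", "q1", False),
    ("ana", "view", "lecture-3", None),
    ("ana", "quiz", "q1", True),
    ("ben", "quiz", "q1", False),
    ("ben", "view", "lecture-3", None),
    ("ben", "view", "reading-2", None),
    ("ben", "quiz", "q1", True),
    ("cy", "view", "lecture-1", None),
    ("cy", "quiz", "q1", True),
]
ranking = rank_review_material(events)
print(ranking)
```

In this toy data, `lecture-3` ranks first for `q1` because both learners who recovered had viewed it. A real system would presumably also weigh the relevance feedback that learners give on each suggestion.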

Originally, instructors had manually tagged 5% of Coursera’s 300,000 quiz questions. With the new approach, Coursera claims to have tagged 60% of them.
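For a sense of scale, the percentages can be turned into absolute counts. This is simple arithmetic on the figures quoted above; the variable names are mine.

```python
TOTAL_QUESTIONS = 300_000

# Integer arithmetic avoids floating-point rounding on the percentages.
tagged_manually = TOTAL_QUESTIONS * 5 // 100   # 5% tagged by instructors
tagged_now = TOTAL_QUESTIONS * 60 // 100       # 60% tagged with the new approach

print(tagged_manually, tagged_now, tagged_now // tagged_manually)
```

That is a jump from 15,000 to 180,000 tagged questions, a twelvefold increase.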

Machine-Assisted Peer Review

Back when I took Learning How to Learn, I remember having to wait about a week for one of my peer-graded assessments, despite the course being one of the most popular on Coursera.

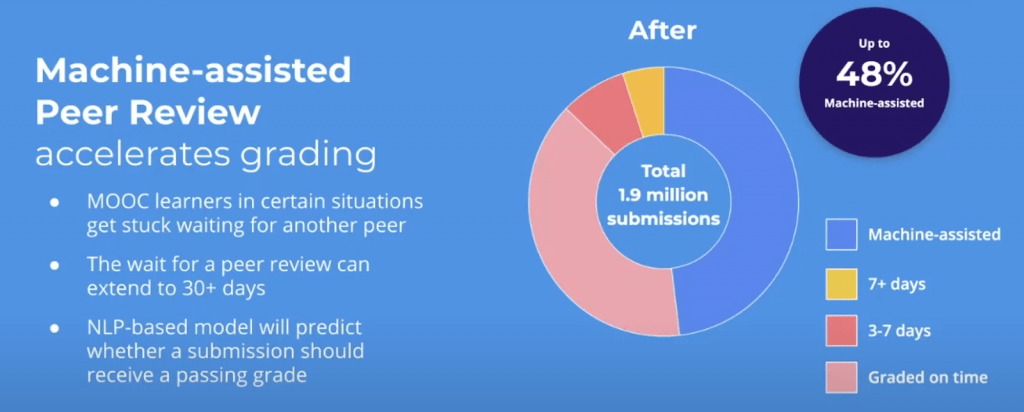

To try to address this issue, Coursera has announced that they will launch machine-assisted peer reviews later this year. The feature will leverage machine learning models trained on manually graded submissions — that either passed or failed — to automatically mark new learner submissions.

Coursera claims that the models will be fast and accurate enough to handle 48% of the 1.9 million unstructured text submissions they receive annually — thereby speeding up grading and allowing human reviewers to focus on assignments that require more nuanced scoring.
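Coursera hasn’t published how the routing works, but the general pattern of grading confident cases automatically and sending the rest to humans can be sketched as follows. The thresholds, the function names, and the idea that the model outputs a pass probability are all assumptions for illustration.

```python
def route_submission(pass_probability, pass_at=0.9, fail_at=0.1):
    """Decide who grades a submission, given a (hypothetical) model's
    estimated probability that the submission deserves a pass."""
    if pass_probability >= pass_at:
        return "auto-pass"
    if pass_probability <= fail_at:
        return "auto-fail"
    # The model is not confident either way: leave it to peer reviewers.
    return "human-review"


def automated_share(probabilities, pass_at=0.9, fail_at=0.1):
    """Fraction of submissions the model would grade without a human."""
    routes = [route_submission(p, pass_at, fail_at) for p in probabilities]
    automated = sum(route != "human-review" for route in routes)
    return automated / len(routes)


# Made-up model scores for four submissions.
scores = [0.97, 0.55, 0.03, 0.80]
print([route_submission(p) for p in scores])
print(automated_share(scores))
```

Tightening the thresholds makes automated grades more reliable but lowers the share handled automatically, which is presumably the trade-off behind a figure like 48%.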

Christy

The interventions are amazingly annoying, especially when they tell me I can finish a course that I have been mentoring for 6 years.

Plagiarism, submission to submission, is rampant, and although we have asked Coursera for years to install a plagiarism checker, nothing has been done. There are such simple tricks for copying submissions.

“Peer Review Assignments” is a silly term. It refers to scientific peer reviews; here there are lots of lazy, uninterested, uneducated people reviewing, as well as malicious ones. We have asked Coursera time and again to install a flagging tool for reviews. Again... nothing.

With unlimited submissions, grades and certificates are worthless.

M.E.

Where did you get the image with the statistics for their machine-assisted peer review and where did Coursera announce this?

Dhawal Shah

You can find it in this video from Coursera Partners Conference: https://www.youtube.com/watch?v=oVXBL8Zv0uU&feature=youtu.be

M.E.

Thank you!